Most teams using AI in operations hit the same problem sooner or later. A task looks simple. The system gets close, then fails in a familiar way. You try again. You add a sentence. You correct the output manually. The next run misses in roughly the same place.

That loop is frustrating. It is also useful.

Repeated frustration usually means the AI workflow is telling you something. The task may still be too vague. The model may have too much freedom. The review step may come too late. The system may not have enough context to succeed reliably. Frustration inversion means treating that pattern as workflow design feedback instead of treating each bad run as a one-off irritation.

When AI Workflow Failure Becomes Signal

One weak result does not tell you much. AI systems still have variance. A single bad answer may be random noise, weak source material, or a bad pass.

The signal appears when the failure repeats.

If a model keeps writing in the wrong tone, the issue may be missing editorial rules. If it keeps overreaching on research, the issue may be weak source constraints. If it keeps damaging the same part of a codebase, the issue may be weak task decomposition, missing tests, or poor repo grounding. If it keeps making bad judgment calls, the issue may be that the task should advise rather than act.
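One lightweight way to separate noise from signal is to tag each failed run with a short failure label and act only once a label repeats. The sketch below is illustrative, not a standard method: the labels, the in-memory log, and the threshold of three are all assumptions.

```python
from collections import Counter

# Illustrative assumption: act on a failure label once it has recurred this many times.
REPEAT_THRESHOLD = 3


def recurring_failures(labels: list[str], threshold: int = REPEAT_THRESHOLD) -> list[tuple[str, int]]:
    """Return (label, count) pairs seen at least `threshold` times, most frequent first."""
    counts = Counter(labels)
    return [(label, n) for label, n in counts.most_common() if n >= threshold]


if __name__ == "__main__":
    # Hypothetical labels a reviewer attached to failed runs over a few weeks.
    log = [
        "wrong_tone",
        "unsupported_claim",
        "wrong_tone",
        "edited_wrong_file",
        "wrong_tone",
        "unsupported_claim",
    ]
    # Labels below the threshold are treated as possible noise for now.
    for label, n in recurring_failures(log):
        print(f"{label}: {n} runs, review the workflow rather than the single output")
```

With this sample log only `wrong_tone` crosses the threshold. The other labels stay on the list and get reconsidered if they keep coming back.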

At that point the frustration is evidence. You already paid for it. The useful move is to extract the lesson.

What Repeated AI Workflow Failure Usually Means

Most recurring AI failures point to one of a few structural problems.

- The task was underspecified.

- The system had too much freedom where tighter rules were needed.

- The context package was missing something important.

- The review step happened after too much damage was already possible.

- The workflow asked the model to make a call it was not well positioned to make.

Teams often react by adding more prompt text. Sometimes that helps. Often it does not. A longer instruction is not the same as a better workflow.

If an agent keeps choosing the wrong files, give it a narrower file boundary or a verification step before edits. If a content workflow keeps producing inflated copy, ban the patterns you do not want and encode the tone you do want. If a research workflow keeps mixing strong evidence with weak claims, require explicit sourcing and confidence language. If a support workflow keeps escalating too late, move the escalation threshold earlier.
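For the agent case, a narrower file boundary can be enforced in code rather than in prompt text. Every path the agent proposes to touch is checked before any edit is applied. This is a hypothetical pre-edit gate: `ALLOWED_DIRS` and `outside_boundary` are illustrative names, and a real agent framework would need its own hook to call something like this.

```python
from pathlib import PurePosixPath

# Hypothetical boundary for one task: the agent may only edit files under these directories.
ALLOWED_DIRS = ("src/billing", "tests/billing")


def outside_boundary(paths: list[str], allowed_dirs: tuple[str, ...] = ALLOWED_DIRS) -> list[str]:
    """Return every proposed path that falls outside the allowed directories."""
    allowed = [PurePosixPath(d).parts for d in allowed_dirs]
    violations = []
    for raw in paths:
        path = PurePosixPath(raw)
        # Absolute paths and ".." segments could escape the boundary, so reject them outright.
        if path.is_absolute() or ".." in path.parts:
            violations.append(raw)
            continue
        # Compare whole path components, so "src/billing_old" does not match "src/billing".
        if not any(path.parts[: len(prefix)] == prefix for prefix in allowed):
            violations.append(raw)
    return violations


if __name__ == "__main__":
    plan = ["src/billing/invoice.py", "src/auth/session.py", "tests/billing/test_invoice.py"]
    problems = outside_boundary(plan)
    if problems:
        # Stop before any edit is applied and send the plan back for revision.
        print("Edit plan rejected, outside task boundary:", problems)
```

The gate rejects the whole plan instead of silently dropping the out-of-bounds file. That keeps the verification step visible to whoever reviews the run.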

These are workflow design changes. They usually matter more than one more irritated retry.

Add Guard Rails Before The Next Run

After a failed run, most teams ask, “How do I fix this output?”

A better question is, “What instruction, guard rail, checklist, eval, or handoff should have existed before this run started?”

That shift moves the work from cleanup to design. One manual correction fixes one result. One good rule can prevent a whole category of bad results from recurring. One review gate can stop a weak workflow from causing visible damage. One tighter task boundary can turn a messy job into a reliable one.

This is how AI work becomes operational. The team stops reacting to every bad run emotionally and starts using recurring failure as input for system improvement.

A Practical Example

Take a team using AI to prepare client-ready proposals. The drafts come back quickly, but they keep overpromising delivery speed, using generic claims, and missing commercial caveats that the sales lead always adds by hand.

The wrong response is to keep correcting those documents manually forever.

The better response is to redesign the workflow:

- add approved positioning language

- add banned phrases and unsupported claim rules

- require a section for delivery assumptions and dependencies

- force the model to separate confirmed scope from inferred scope

- add a final human approval step before anything client-facing leaves the system

Now the frustration changed the workflow. That is the useful outcome.
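Several of those changes can be checked mechanically before the human approval step. Here is a minimal sketch, assuming drafts arrive as plain text with section headings on their own lines. The banned phrases, heading names, and `check_proposal` helper are hypothetical placeholders, not a recommended rule set.

```python
import re
from dataclasses import dataclass, field

# Hypothetical rules for the proposal workflow; replace with the team's own lists.
BANNED_PHRASES = ["guaranteed", "best-in-class", "in no time"]
REQUIRED_SECTIONS = [
    "Delivery assumptions and dependencies",
    "Confirmed scope",
    "Inferred scope",
]


@dataclass
class CheckResult:
    problems: list[str] = field(default_factory=list)

    @property
    def ready_for_human_review(self) -> bool:
        # Passing only qualifies a draft for human approval; nothing is sent automatically.
        return not self.problems


def check_proposal(draft: str) -> CheckResult:
    result = CheckResult()
    lowered = draft.lower()
    for phrase in BANNED_PHRASES:
        # Plain substring match: deliberately strict, so it may flag harmless uses too.
        if phrase.lower() in lowered:
            result.problems.append(f"banned phrase: {phrase!r}")
    for heading in REQUIRED_SECTIONS:
        # A section counts as present only if its heading sits on a line by itself.
        pattern = rf"^\s*{re.escape(heading)}\s*$"
        if not re.search(pattern, draft, flags=re.IGNORECASE | re.MULTILINE):
            result.problems.append(f"missing section: {heading!r}")
    return result


if __name__ == "__main__":
    draft = (
        "Delivery assumptions and dependencies\n"
        "Timeline assumes client data access by week 2.\n"
        "Confirmed scope\n"
        "Migration of the reporting dashboard.\n"
        "Inferred scope\n"
        "Possible SSO integration, to be confirmed.\n"
    )
    print(check_proposal(draft).ready_for_human_review)  # True
```

The check does not replace the sales lead's judgment. It removes the repetitive corrections so the human review can focus on commercial substance.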

The same logic works in engineering, research, operations, and support. If the same failure keeps appearing, the job is no longer to complain about it. The job is to contain it.

Prompt Fix Or Workflow Redesign

One of the most important judgment calls in AI work is deciding whether a problem belongs in the prompt or in the workflow.

If the failure is small and local, the fix may belong in the prompt. A missing output format, a missing audience definition, or an omitted constraint can often be corrected directly.

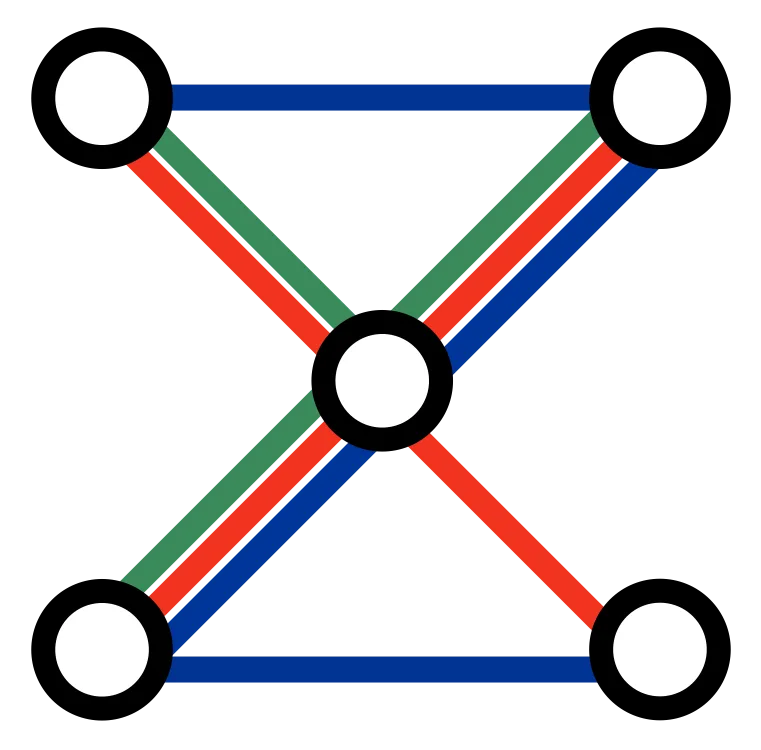

If the failure keeps returning across runs, people, or models, it usually belongs in the workflow. That might mean a checklist, a better system prompt, a more structured input format, a narrower tool boundary, or an approval gate.

Many teams stay trapped in local prompt repair long after the real problem became structural. That is one reason file-based workflows are useful in AI operations. When instructions, assets, validation, and review steps live in files and scripts, the next run can benefit from the last failure.

Do Not Turn Every Friction Point Into Bureaucracy

Not every bad run deserves a new policy. Some failures are random. Some are cheap to fix. Some happen so rarely that a heavy control would cost more than the mistake.

The goal is not to surround every workflow with needless rules. The goal is to identify repeatable, expensive, or risky failure modes and address them at the right level.

Teams need to ask:

- Does this happen often enough to matter?

- Is the cost of repetition higher than the cost of a new rule?

- Should the system be constrained more tightly, or should the task be decomposed differently?

- Does this step need review, or should the model stop making this call altogether?
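The second question can be answered with back-of-the-envelope arithmetic. Here is a rough sketch, assuming costs are measured in any consistent unit (minutes of rework, for example). The field names, the six-month horizon, and the example figures are hypothetical. This kind of estimate also misses rare but risky failures, which may justify a control even when the numbers say otherwise.

```python
from dataclasses import dataclass


@dataclass
class FailureMode:
    failures_per_month: float  # how often the failure recurs
    cost_per_failure: float    # e.g. minutes of manual rework each time


@dataclass
class ProposedRule:
    build_cost: float          # one-time cost to write the rule, check, or gate
    overhead_per_run: float    # friction the rule adds to every run
    runs_per_month: float
    expected_reduction: float  # assumed fraction of failures prevented, 0..1


def rule_pays_off(failure: FailureMode, rule: ProposedRule, months: float = 6.0) -> bool:
    """Compare rework avoided over the horizon with the rule's build and running cost."""
    avoided = failure.failures_per_month * failure.cost_per_failure * rule.expected_reduction * months
    cost = rule.build_cost + rule.overhead_per_run * rule.runs_per_month * months
    return avoided > cost


if __name__ == "__main__":
    # Frequent proposal rework: 1080 minutes avoided vs 360 spent, so the rule is worth it.
    frequent = FailureMode(failures_per_month=8, cost_per_failure=30)
    light_rule = ProposedRule(build_cost=120, overhead_per_run=1, runs_per_month=40, expected_reduction=0.75)
    print(rule_pays_off(frequent, light_rule))  # True

    # Rare, cheap failure: 54 minutes avoided vs 720 spent, so a heavy control is not.
    rare = FailureMode(failures_per_month=0.5, cost_per_failure=20)
    heavy_rule = ProposedRule(build_cost=240, overhead_per_run=2, runs_per_month=40, expected_reduction=0.9)
    print(rule_pays_off(rare, heavy_rule))  # False
```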

Good AI operations depend on that pruning. A system buried under pointless constraints becomes slow and brittle. A system with no constraints wastes time in a different way.

The Habit That Compounds

When frustration shows up, pause before you retry.

Look for the pattern. Name it. Decide whether the issue lives in task framing, context, workflow structure, permissions, or review. Then make a change that improves the next run, not only the current one.

Teams that keep extracting rules from repeated failure get calmer, faster, and more reliable over time. Teams that keep repairing the same output by hand stay busy without getting much better.

Frustration is normal in AI work. Repeating the same frustration indefinitely is optional.

If your team is using AI across content, engineering, research, or internal operations and still spending too much time on avoidable retries, contact us. We help teams turn loose AI usage into workflows with clearer constraints, better handoffs, and less repeated friction.