There is a small robot in the corner of this website. It is there to answer questions, point people to the right page, help qualify what they are looking for, and occasionally nudge a promising conversation toward contact. Because this site is about AI and agentic systems, one obvious question follows quickly: why is that robot not simply a live LLM chatbot?

The short answer is that we chose not to do that yet.

The longer answer is more interesting. We are using our own agentic webmaster as a guard rail around the site, and part of that workflow is to inject content specifically for the bot. We want the robot to know the services, ideas, FAQs, blog posts, and navigation paths of this site in a structured way. We want it to be useful. But we also want it to stay fast, cheap to run, easy to reason about, and simple to maintain.

That trade-off led us somewhere we actually like quite a lot: a modern site bot with a slightly old-school soul.

Before large language models, there were text adventures, MUDs, parser-driven role playing games, and a whole class of systems that felt alive because the rules were clever, the content was well prepared, and the interaction design respected the imagination of the player. Many of us who have been building software since the last century still have a deep fondness for those systems. They did not pretend to understand everything. They only had to understand enough, in the right way, to make the interaction feel rewarding.

That is very close to what this robot does.

At runtime, the bot is deliberately simple. It searches a prepared knowledge layer, matches what you asked against site content, ranks likely answers, and responds with relevant links, suggestions, and next steps. There is no live model call behind every message. No token meter spinning in the background for routine site questions. No extra moving parts just to answer something that the website already knows.
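Here is a minimal sketch of what deterministic matching and ranking can look like. It assumes a tiny in-memory knowledge layer. The `Entry` records, the `KNOWLEDGE` list, and the stopword set are hypothetical. Plain Jaccard token overlap stands in as the scoring method, and the bot on this site may rank differently.

```python
import re
from dataclasses import dataclass


@dataclass
class Entry:
    question: str
    answer: str
    links: list[str]


# Hypothetical prepared knowledge layer; in practice it would be generated upstream.
KNOWLEDGE = [
    Entry("What services do you offer?", "We build agentic systems and AI workflows.", ["/services"]),
    Entry("How can I contact you?", "Use the contact form.", ["/contact"]),
    Entry("Do you write about AI?", "Yes, see the blog.", ["/blog"]),
]

# Illustrative stopword list; real matching would need a richer one.
STOPWORDS = {"a", "an", "the", "do", "you", "i", "can", "how", "what", "is", "to", "of"}


def tokens(text: str) -> set[str]:
    """Lowercase alphanumeric word tokens, minus stopwords."""
    return {t for t in re.findall(r"[a-z0-9]+", text.lower()) if t not in STOPWORDS}


def rank(query: str, entries: list[Entry], top_k: int = 3) -> list[tuple[float, Entry]]:
    """Score entries by Jaccard overlap of query and question tokens, best first."""
    q = tokens(query)
    scored = []
    for entry in entries:
        t = tokens(entry.question)
        union = q | t
        score = len(q & t) / len(union) if union else 0.0
        if score > 0:  # ignore entries with no shared terms
            scored.append((score, entry))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:top_k]
```

The scoring is a pure function of the query and the prepared entries, so the same question always produces the same ranking. That property is what makes this kind of runtime easy to test. An empty result is a natural point to fall back to navigation suggestions or a contact prompt.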

The important point is that simple does not mean dumb. We still use AI where it pays off. We use it upstream.

As part of the site update process, we maintain an internal FAQ layer with generated question-answer pairs derived from our pages, blog posts, services, and curated chat overrides. In other words, we prepare the knowledge before the visitor arrives. We can shape likely questions, tighten answers, add follow-up prompts, and connect each answer to the right pages. Some of that structure is generated automatically from content. Some of it is refined through our skill-driven workflow. And yes, some of the rules and patterns behind it were created with AI as well. We are not anti-LLM. We are simply using LLMs where they create leverage instead of cost.
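One way to picture such an FAQ layer is as a set of records holding answers, links, and follow-up prompts, where curated overrides take precedence over generated pairs. The sketch below assumes that precedence rule and uses hypothetical names (`FaqEntry`, `merge_faq`). It illustrates the idea and does not reflect our actual schema.

```python
from dataclasses import dataclass, field


@dataclass
class FaqEntry:
    question: str
    answer: str
    links: list[str] = field(default_factory=list)
    follow_ups: list[str] = field(default_factory=list)
    source: str = "generated"  # assumed values: "generated" or "override"


def normalize(question: str) -> str:
    """Collapse case and whitespace so near-identical questions share one key."""
    return " ".join(question.lower().split())


def merge_faq(generated: list[FaqEntry], overrides: list[FaqEntry]) -> dict[str, FaqEntry]:
    """Build the FAQ layer; a curated override replaces a generated entry with the same question."""
    layer = {normalize(e.question): e for e in generated}
    for e in overrides:
        layer[normalize(e.question)] = e  # curated content wins
    return layer
```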

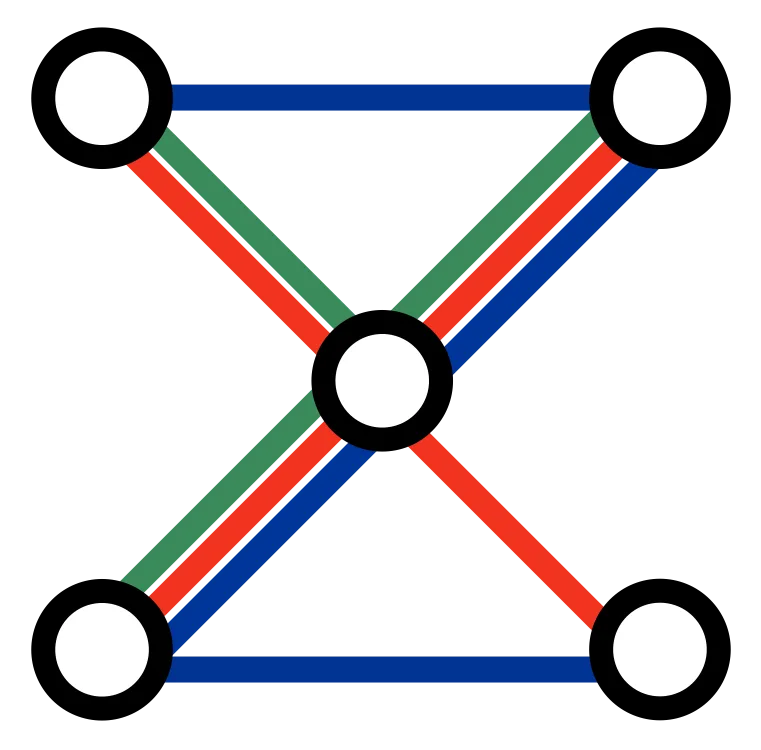

This is why we say the robot is not using LLMs yet, but the system around it absolutely benefits from them. The intelligence is front-loaded into the content pipeline. The runtime stays deterministic.

That architecture has a few practical advantages. First, it keeps response times snappy. Second, it avoids paying model costs for every visitor interaction. Third, it reduces operational complexity because the behavior is easier to test, inspect, and tune. If a page changes, our update process can regenerate the hidden chat knowledge, keep the bot aligned with the latest content, and avoid turning the website into a fragile demo.

We also gave ourselves a small engineering gift: a caching hack that skips regeneration work when content has not changed. The bot knowledge builder hashes source pages and reuses cached entries for unchanged material. That means the skill-driven update flow stays efficient even as the site grows. Years of articles, service pages, press releases, deep pages, and FAQs do not need to be reprocessed from scratch every time. The system only refreshes what actually moved.
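The following is a minimal sketch of hash-based change detection. It assumes pages arrive as a URL-to-text mapping and that `generate` is a hypothetical, expensive step that produces entries for one page (for example, an LLM call). SHA-256 from Python's `hashlib` serves as the content fingerprint. The cache layout is an assumption.

```python
import hashlib
from typing import Callable

Cache = dict[str, tuple[str, list[str]]]  # url -> (content hash, generated entries)


def content_hash(text: str) -> str:
    """Stable fingerprint of a page's source text."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def build_knowledge(
    pages: dict[str, str],
    cache: Cache,
    generate: Callable[[str], list[str]],
) -> tuple[dict[str, list[str]], int]:
    """Return entries per URL plus the number of pages that were regenerated.

    Unchanged pages reuse cached entries; only new or edited pages call `generate`.
    """
    knowledge: dict[str, list[str]] = {}
    regenerated = 0
    for url, text in pages.items():
        digest = content_hash(text)
        cached = cache.get(url)
        if cached is not None and cached[0] == digest:
            knowledge[url] = cached[1]  # cache hit: skip the expensive step
            continue
        entries = generate(text)  # cache miss: new or changed page
        cache[url] = (digest, entries)
        knowledge[url] = entries
        regenerated += 1
    # Drop cache entries for pages that no longer exist.
    for stale in set(cache) - set(pages):
        del cache[stale]
    return knowledge, regenerated
```

Pruning stale URLs keeps removed pages from lingering in the bot's knowledge.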

This becomes especially useful once a website has real history. Most companies are sitting on far more content than they actively use: old blog posts, announcements, campaign pages, case studies, long-form product explanations, and niche FAQ material that still contains valuable answers. A tailored site bot can unlock all of that. It can surface relevant material faster, drive deeper engagement, run lightweight surveys to sharpen intent, and help route people toward the right offer or conversation without making them hunt through navigation menus.

On this site, that layer goes beyond simple retrieval. The robot can also gather a few structured details, help a visitor clarify what they need, and move toward a cleaner handoff. This is where the old text-adventure influence becomes especially fun. Good guided conversation is not only about free-form language. It is about pacing, hints, branching, and knowing when to offer the next meaningful move.
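Guided conversation of this kind can be modelled as a small state machine. The sketch below assumes a hypothetical three-step qualification flow. Each step carries a condition, so a visitor who is only browsing skips the qualifying questions. The prompts, field names, and branching rule are illustrative only.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

# (field name, prompt, condition deciding whether to ask, given answers so far)
Step = tuple[str, str, Callable[[dict[str, str]], bool]]

STEPS: list[Step] = [
    ("topic", "What brings you here: a site bot, an agentic workflow, or just browsing?", lambda a: True),
    ("team_size", "Roughly how large is your team?", lambda a: a.get("topic") != "just browsing"),
    ("timeline", "When would you like to start?", lambda a: a.get("topic") != "just browsing"),
]


@dataclass
class GuidedFlow:
    answers: dict[str, str] = field(default_factory=dict)
    step: int = 0

    def _skip_inapplicable(self) -> None:
        # Branching: move past steps whose condition rejects the answers so far.
        while self.step < len(STEPS) and not STEPS[self.step][2](self.answers):
            self.step += 1

    def prompt(self) -> Optional[str]:
        """Next question to ask, or None when the flow is complete."""
        self._skip_inapplicable()
        return STEPS[self.step][1] if self.step < len(STEPS) else None

    def answer(self, text: str) -> Optional[str]:
        """Record the reply for the current step and return the next prompt."""
        self._skip_inapplicable()
        if self.step >= len(STEPS):
            return None
        self.answers[STEPS[self.step][0]] = text.strip().lower()
        self.step += 1
        return self.prompt()

    def done(self) -> bool:
        """True once every applicable step has been answered."""
        self._skip_inapplicable()
        return self.step >= len(STEPS)
```

Once `done()` is true, `answers` holds the structured details for a cleaner handoff.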

Then there is the analytics side, which matters just as much as the conversation itself. Our bot is deeply integrated with our own analytics platform. When a visitor has explicitly accepted optional cookies, we can analyze questions, responses, navigation paths, and conversation patterns inside our self-hosted environment. That helps us understand what people are looking for, which parts of the site are doing real work, which topics create friction, and where the content itself should improve.
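Consent gating can sit at the exact point where an event is recorded. In this sketch, `consent` is assumed to reflect whether the visitor accepted optional cookies. A plain list stands in for the self-hosted analytics store, and the event fields are hypothetical.

```python
import json
import time
from typing import Optional


def log_conversation_event(
    consent: bool,
    question: str,
    answer_id: Optional[str],
    path: str,
    sink: list[str],
) -> bool:
    """Append a JSON event to the (self-hosted) sink only if optional cookies were accepted.

    Returns True if the event was recorded, False if it was dropped for lack of consent.
    """
    if not consent:
        return False  # no consent: nothing is stored
    event = {
        "ts": int(time.time()),
        "question": question,
        "answer_id": answer_id,  # None when the bot found no match
        "path": path,
    }
    sink.append(json.dumps(event))
    return True
```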

This is useful for more than bot tuning. It tells us what the audience cares about, what kinds of visitors are arriving, which questions keep repeating, and where there may be unmet demand. That can inform content strategy, page structure, offer design, and future experiments. In other words, the robot is not only a helper for visitors. It is also an instrument for learning.

The important boundary is privacy. We are not interested in creepy surveillance theatre. We respect the GDPR, use consent properly, and keep these conversations inside our self-hosted stack rather than spraying them across a chain of third-party services. The point is to learn enough to improve the site and the experience, not to build an ad-tech monster.

Over time, we may decide that a live LLM belongs in this loop. There are cases where it clearly would. But for this stage of the project, the more elegant answer was to do the simpler thing well. A prepared knowledge layer. Smart rules. Skill-driven updates. Efficient caching. Good analytics. Strong privacy.

Sometimes a bit of clever coding is all you need.

And if you like this pattern, we can help you build one too. We can tailor a similar bot to your website, connect it to your content base, shape the internal FAQ, align it with your tone and offers, and feed the resulting learnings back into your site operations. If your company is sitting on years of useful material that people rarely find, this is one of the cleanest ways to make that knowledge work again. Curious how this feels in practice? Try the robot on this site and see where it takes you.