As of March 19, 2026, the field of agentic systems is moving fast enough that many teams see a blur of demos, names, and screenshots but still do not have a clean way to compare what these systems actually are. The useful distinction is usually not “which model is smartest” but “what kind of operating surface does this tool provide, how much autonomy does it have, and what controls sit around it?”

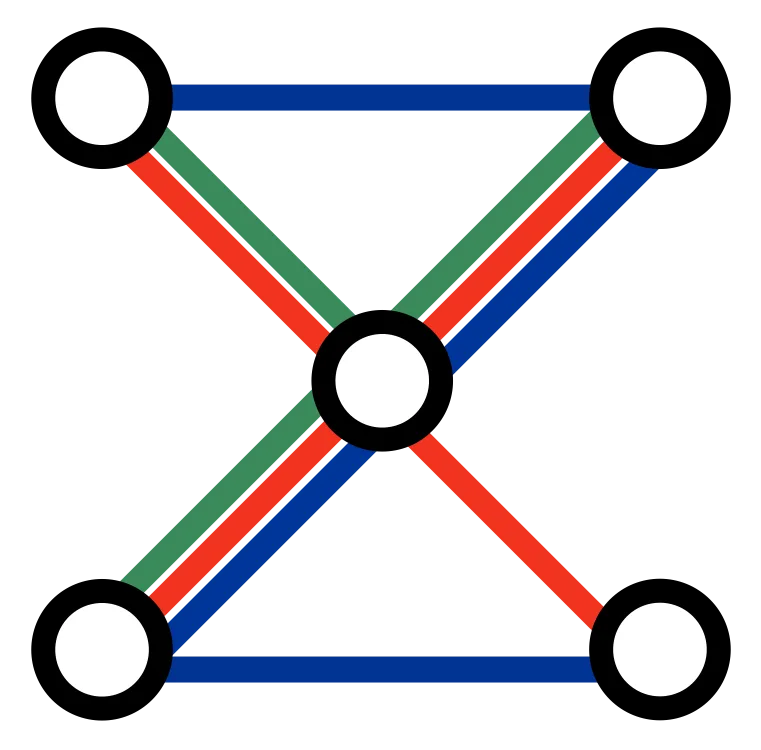

At a high level, OpenClaw, NanoClaw, and NanoBot are the systems we help clients put to work directly. Claude Code, Claude Cowork, and Codex are broader external systems that represent where this class of tooling is heading. They all sit in the same family because they move beyond one-shot prompting and toward delegated multi-step work with tool access, file access, instructions, and reviewable execution.

Here is a practical comparison table to keep the main differences straight:

| System | Best fit | Operating surface | Strength | Caution |
|---|---|---|---|---|
| OpenClaw | Teams that want a broader agentic operating layer | Multi-workflow operations across tools and processes | Shared controls, reusable workflows, stronger operational reach | Needs more workflow design and setup discipline |
| NanoClaw | Small teams that want a lighter agentic workbench | Compact multi-workflow setup | Faster rollout with more flexibility than a single bot | Less comprehensive than a broader platform layer |
| NanoBot | Teams with one bounded workflow to automate | Single specialist workflow | Fast, narrow, concrete value | Scope is intentionally limited |
| Claude Code | Engineers working inside repositories and terminals | Repo, shell, files, coding workflows | Strong fit for structured, inspectable work | Can be too technical without a clear operating model |
| Claude Cowork | Broader knowledge work with long-running tasks | Claude Desktop with local files and task execution | More accessible surface for non-coding tasks | Broader file access and task autonomy need tighter oversight |
| Codex | Teams that want a configurable coding-agent environment | App, CLI, IDE, repo, shell | Strong control model around instructions, skills, approvals, and sandboxing | Still depends heavily on good repo hygiene and review practices |

OpenClaw is best understood as a fuller operating layer. It is useful when a team wants multiple workflows, shared controls, reusable patterns, and a system that can sit closer to day-to-day operations. NanoClaw is the lighter-weight sibling: more flexible than a single specialist bot, but smaller and faster to roll out than a broader platform setup. NanoBot is narrower still. It is the right fit when one workflow such as intake triage, document preparation, or lead qualification deserves a focused agent of its own.

Claude Code is a strong terminal-first coding agent for people who want the agent inside a repository and command-line workflow. Anthropic emphasizes subagents, hooks, permissions, and memory files in its Claude Code documentation, which makes it especially useful when a team wants coding work to live inside a structured, inspectable environment. Claude Cowork uses the same agentic architecture inside Claude Desktop for broader knowledge work. Anthropic describes it as a research preview that runs tasks on your computer, can coordinate sub-agents, uses a VM environment, and supports plugins, scheduled tasks, and file access for longer-running work beyond coding. Codex sits in a similar category on the OpenAI side: a coding agent ecosystem built around agentic coding models, AGENTS.md instructions, skills, subagents, approval policies, and sandboxing modes that range from read-only to dangerous full access.

The pros and cons follow from that positioning. OpenClaw is strong when you want a serious operating layer, but it asks for more setup and workflow design. NanoClaw is easier to adopt and easier to control, but it is not trying to be a company-wide platform on day one. NanoBot is fast and concrete, but intentionally narrow. Claude Code and Codex are excellent for engineering-heavy environments because they work well with repositories, shell tools, instructions, and repeatable workflows, but they can be overkill for non-technical teams if nobody designs the operating model around them. Cowork broadens that access for knowledge work, but because it reaches into local files and long-running tasks, it introduces a different risk profile and requires even more discipline around permissions and oversight.

It is also worth acknowledging the current friction directly. Setup is still harder than it should be. Many of the strongest tools still assume a developer-friendly environment, and a lot of the best patterns today emerge in repositories, terminals, structured files, and scripted workflows before they show up in smoother business interfaces. That can feel like an argument for waiting. We think it is usually the opposite.

The common feature set is what really defines this class of systems. They usually have an instruction layer such as AGENTS.md, CLAUDE.md, folder instructions, or global rules. They often support subagents or specialized workers to split tasks. They can use tools, file systems, connectors, or shell access instead of only generating text. They increasingly support reusable skills, plugins, slash commands, scheduled tasks, hooks, or background execution. And they work best when the environment around them is structured enough that the agent can inspect the current state, apply rules, and leave reviewable artifacts behind.

That matters because a useful agent is not only something you talk to on demand. In many of these systems, agents can also schedule recurring tasks, check whether work has moved, prepare summaries, watch for changes, and push updates back to the team on a regular cadence. In practice that means an agent can send a morning status digest, monitor whether a release checklist was completed, compile competitor changes into a weekly brief, or remind a team when a workflow has stalled. The point is not just interaction. The point is operational follow-through.
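To make the follow-through idea concrete, here is a minimal sketch of the check behind a "this workflow has stalled" reminder. It assumes the team already records a last-updated timestamp per work item; the function names `stalled_items` and `digest` and the two-day threshold are hypothetical and do not come from any of the tools discussed here.

```python
from datetime import datetime, timedelta

def stalled_items(last_updated: dict[str, datetime],
                  now: datetime,
                  max_idle: timedelta) -> list[str]:
    """Return the names of items idle for longer than max_idle, sorted by name."""
    return sorted(name for name, ts in last_updated.items() if now - ts > max_idle)

def digest(last_updated: dict[str, datetime],
           now: datetime,
           max_idle: timedelta) -> str:
    """Build the one-line status message a scheduled agent could post."""
    stalled = stalled_items(last_updated, now, max_idle)
    if not stalled:
        return "All tracked items moved recently."
    return "Stalled: " + ", ".join(stalled)

if __name__ == "__main__":
    # Illustrative data only.
    now = datetime(2026, 3, 19, 9, 0)
    items = {
        "release-checklist": datetime(2026, 3, 15, 9, 0),
        "intake-triage": datetime(2026, 3, 19, 8, 0),
    }
    print(digest(items, now, timedelta(days=2)))
```

How the digest is scheduled and where it is delivered depends on the tool. The sketch covers only the decision about what counts as stalled, which is the part a team should own explicitly.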

Communication surfaces matter too. Some agents live mainly in the terminal or desktop app, but the broader pattern is increasingly about agents that can meet the team where the work already happens. That may be team chat, issue trackers, email, or more private channels such as WhatsApp. Once an agent can receive instructions, ask follow-up questions, and report results in the same channels people already use, it starts to behave less like a novelty interface and more like an additional operating layer around the work.

The constraint layer is usually text. Guard rails are often written as standing instructions, repo-level rules, folder-level instructions, task-specific prompts, skills, or plugins. That sounds simple, but it is powerful because it is editable. When the agent behaves badly, you can tighten the rules. When the agent misses context, you can add it. When a workflow proves reliable, you can codify the pattern into a reusable skill. Over time, the quality of the system depends less on one brilliant prompt and more on whether the team keeps refining the written operating discipline around it.
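One way to picture layered text instructions is as a chain collected from the outermost folder down to the folder where the agent is working, so that more specific rules come last. The sketch below is an assumption for illustration: the `instruction_chain` function is hypothetical, files are modeled as an in-memory dictionary, and real tools apply their own discovery and precedence rules.

```python
from pathlib import PurePosixPath

def instruction_chain(files: dict[str, str],
                      workdir: str,
                      name: str = "AGENTS.md") -> list[str]:
    """Collect instruction texts from the outermost folder down to workdir.

    `files` maps relative paths to file contents (a stand-in for a real repo).
    """
    target = PurePosixPath(workdir)
    # parents lists the nearest folder first; reverse it to start at the top.
    folders = list(target.parents)[::-1] + [target]
    chain = []
    for folder in folders:
        text = files.get(str(folder / name))
        if text is not None:
            chain.append(text)
    return chain

if __name__ == "__main__":
    # Illustrative contents only.
    repo = {
        "AGENTS.md": "Never commit secrets.",
        "app/AGENTS.md": "Run tests before proposing changes.",
        "app/docs/AGENTS.md": "Keep a plain, direct tone.",
        "app/src/AGENTS.md": "Follow the lint config.",
    }
    for rule in instruction_chain(repo, "app/docs"):
        print(rule)
```

Keeping rules in files like this is what makes them editable: tightening a guard rail becomes a reviewed change to one file rather than a change in someone's prompt habits.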

Some systems also let agents write down what they learn in files they can revisit later. That might be a project memory file, a scratchpad, a task log, a reusable checklist, or a repository instruction file. Used well, this turns repeated work into a compounding asset. The agent does not just complete a task. It leaves behind a better way to do the next one. Used badly, it can also create stale or contradictory instructions, which is why these learning files still need review, pruning, and ownership.
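The review and pruning step can itself be made routine. The following is a minimal sketch, assuming each learned note carries a topic, a rule, and a last-reviewed date; the `Note` structure, the `review_queue` function, and the 90-day threshold are hypothetical choices for illustration, not a format any of these tools prescribes.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date

@dataclass(frozen=True)
class Note:
    topic: str      # what the note is about, e.g. "deploy"
    rule: str       # what the agent wrote down
    reviewed: date  # when a human last confirmed it

def review_queue(notes: list[Note], today: date, max_age_days: int = 90):
    """Return (stale notes, topics that carry more than one distinct rule)."""
    stale = [n for n in notes if (today - n.reviewed).days > max_age_days]
    rules_by_topic = defaultdict(set)
    for n in notes:
        rules_by_topic[n.topic].add(n.rule)
    conflicts = sorted(t for t, rules in rules_by_topic.items() if len(rules) > 1)
    return stale, conflicts

if __name__ == "__main__":
    # Illustrative notes only.
    notes = [
        Note("deploy", "Deploy on Fridays.", date(2025, 6, 1)),
        Note("deploy", "Never deploy on Fridays.", date(2026, 3, 1)),
        Note("tone", "Use plain language.", date(2026, 2, 1)),
    ]
    stale, conflicts = review_queue(notes, date(2026, 3, 19))
    print([n.rule for n in stale], conflicts)
```

A topic with two distinct rules is not always a contradiction, so the output is a queue for a human owner to look at rather than something to delete automatically.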

That is also where the risks show up. A system with broad file access, shell access, internet access, or connector access can move from useful to dangerous very quickly if the surrounding controls are weak. Typical failure modes include editing the wrong files, making destructive changes too early, leaking sensitive data through tools or web access, automating brittle workflows that were never stable to begin with, or creating expensive loops where the team mistakes visible activity for genuine progress.

The mitigations are not mysterious, but they do require discipline. Start with scoped permissions, narrow task boundaries, and explicit owners. Prefer read-only or workspace-limited modes first. Use sandboxing where the tool supports it. Add approvals before destructive actions, network access, or write paths outside the intended scope. Use skills, plugins, and runbooks so the system is not reinventing the workflow from scratch every time. Keep instructions close to the work. Add hooks, tests, validation steps, and human review at the points where mistakes would actually matter. And when you introduce recurring tasks or chat-connected agents, define what they are allowed to send, to whom, how often, and what should trigger escalation back to a human.
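The permission and approval rules above can be written down as a small decision function. This is a sketch under stated assumptions, not the policy engine of any tool named in this article: the `Action` type, the `decide` function, and the three outcomes ("allow", "ask", "deny") are hypothetical, and paths are compared textually without resolving symlinks.

```python
from dataclasses import dataclass
from pathlib import PurePosixPath

@dataclass(frozen=True)
class Action:
    kind: str    # "read", "write", "delete", or "network"
    target: str  # file path for file actions, host name for network actions

def decide(action: Action, workspace: str) -> str:
    """Return "allow", "ask" (human approval), or "deny" for a proposed action."""
    if action.kind == "network":
        return "ask"  # network access always needs approval in this sketch
    path = PurePosixPath(action.target)
    if ".." in path.parts:
        return "deny"  # refuse paths that try to climb out of a folder
    root = PurePosixPath(workspace)
    inside = path == root or root in path.parents
    if action.kind == "read":
        return "allow" if inside else "ask"
    if action.kind == "write":
        return "allow" if inside else "deny"
    if action.kind == "delete":
        return "ask" if inside else "deny"  # destructive, so never automatic
    return "deny"  # unknown action kinds are refused by default

if __name__ == "__main__":
    ws = "/work/project"
    print(decide(Action("write", "/work/project/notes.md"), ws))
    print(decide(Action("delete", "/work/project/notes.md"), ws))
    print(decide(Action("write", "/etc/hosts"), ws))
```

The design choice worth copying is the default: anything the policy does not recognize is denied, and anything destructive or outward-facing goes to a person first.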

This is where the positive case for moving now starts to matter. If you are willing to take a calculated risk, you do not have to wait years for a more polished generation of tools to arrive before you begin capturing value. You can start now with bounded workflows, sensible controls, and a codified operating surface, and benefit from faster learning, lower coordination cost, and earlier institutional experience while others are still waiting for maturity to arrive prepackaged.

That is also why we keep returning to the logic in “Why Code-Centric AI Workflows Will Outperform Traditional Business Tools.” Codifying the business is not a detour. It is how teams get ahead of the curve. Once work lives in forms that agents can inspect, version, test, and improve, the current generation of tools becomes much more useful right away. The setup burden is real, but so is the advantage of building the operating discipline now instead of joining later when everyone has access to the same polished surface.

If you want help deciding which entry point fits your team, start with our OpenClaw Setup service.