Many people think of AI capability as a choice between different tools or models. In practice, the operator matters more. Two people can use the same tool and get very different results because one knows how to structure the work and the other does not.

Moving from prompting to agentic workflows means learning a sequence of skills. Trying to take shortcuts here does not really work. You can switch tools, but you still need to figure out how to use them. Most of these tools also look very similar to users who have not yet progressed far in that learning. This article looks at the skills people need to become effective with agentic workflows and how each one builds on the last. Role-playing games often organize abilities in a skill tree: you start with basic abilities and unlock more advanced ones as you progress. That is a useful way to think about agentic work as well.

For most users, the issue is not tool access. No product produces reliable outcomes on its own. You need to learn how to ask, what to ask for, when to correct, and how to get repeatable results. AI systems often give you exactly what you asked for, even when the answer is wrong. Hallucinations, weak grounding, and false confidence are still common. Skilled operators catch and correct those failure modes consistently.

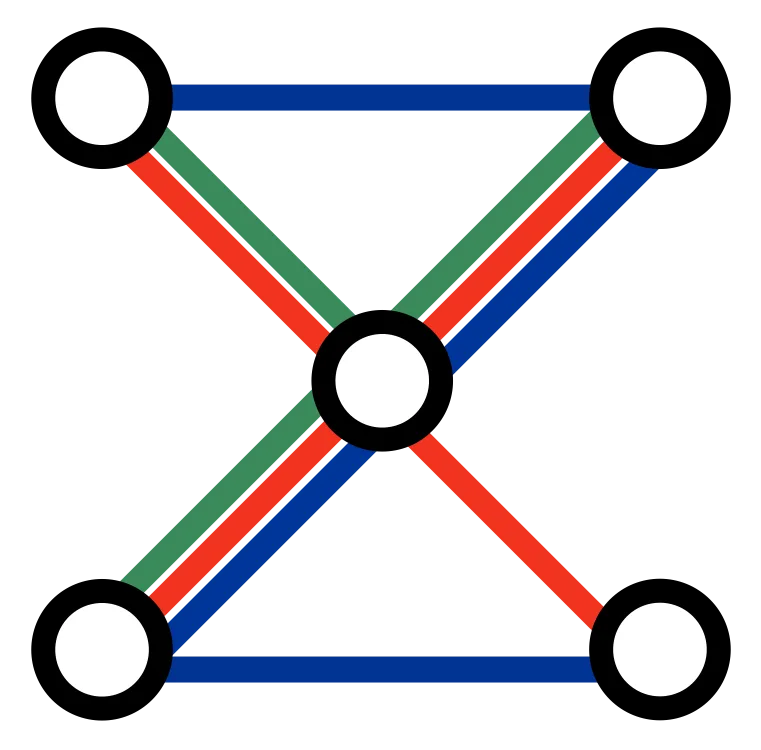

The Skill Tree

Most users start at the bottom of this progression because that is what they already know. They have used ChatGPT or similar tools to ask questions, summarize documents, or draft text. They have also seen the limits: convincing answers that are wrong, missing sources, weak grounding, and outputs that fall apart once the task gets more specific. The next layer starts when users stop treating AI as a one-shot answer machine and start giving it bounded tasks, better context, and clearer review criteria. From there, the progression moves toward repeatable workflows, delegated systems, and changes to how teams organize the work itself.

It is also still early. Many AI users are still building these skills, and much of the market is still experimental. The further you move up this progression, the less polished the experience often becomes. That is especially true for tools designed mainly for users who still operate at the bottom of the skill tree.

At XYZ by FORMATION, we help people and teams adopt agentic workflows with pragmatic coaching focused on getting real work done. We have spent a lot of time testing the skills in this tree, trying different tools, and learning what works in each context and what is still rough.

```mermaid
flowchart TD
    subgraph L0["One-Shot Prompting"]
        direction LR
        A1["Task framing"]
        A2["Role prompting"]
        A3["Few-shot prompting"]
        A4["Source retrieval"]
        A5["Citation asking"]
        A6["Answer review"]
        A7["Hallucination spotting"]
        A8["Output specification"]
    end

    subgraph L1["Simple Agents"]
        direction LR
        B1["Task decomposition"]
        B2["Context packaging"]
        B3["System prompts"]
        B4["Agent instructions"]
        B5["Tool selection"]
        B6["File and repo grounding"]
        B7["Step planning"]
        B8["Artifact review"]
    end

    subgraph L2["Workflows and Guard Rails"]
        direction LR
        C1["Workflow design"]
        C2["Guard rail design"]
        C3["Structured outputs"]
        C4["Eval design"]
        C5["Retry and fallback logic"]
        C6["Approval gates"]
        C7["State and memory design"]
        C8["Scheduling and alerting"]
    end

    subgraph L3["Delegation and Control"]
        direction LR
        D1["Delegation design"]
        D2["Role design"]
        D3["Supervisor patterns"]
        D4["Context handoffs"]
        D5["Approval routing"]
        D6["Permission design"]
        D7["Queue design"]
        D8["Escalation paths"]
    end

    subgraph L4["Organizational Transformation"]
        direction LR
        E1["Function redesign"]
        E2["Workflow ownership"]
        E3["Governance"]
        E4["Operator training"]
        E5["Change management"]
        E6["Cost and risk controls"]
        E7["Cross-functional integration"]
        E8["Capability rollout"]
    end

    A1 --> B1 --> C1 --> D1 --> E1
    A2 --> B2 --> C2 --> D2 --> E2
    A3 --> B3 --> C3 --> D3 --> E3
    A4 --> B4 --> C4 --> D4 --> E4
    A5 --> B5 --> C5 --> D5 --> E5
    A6 --> B6 --> C6 --> D6 --> E6
    A7 --> B7 --> C7 --> D7 --> E7
    A8 --> B8 --> C8 --> D8 --> E8

    B2 --> C7
    B3 --> C2
    B5 --> C4
    B6 --> C3
    C4 --> D3
    C5 --> D8
    C6 --> D5
    C7 --> D4
```

One-Shot Prompting

This is ordinary ChatGPT-style usage: drafting an article, researching a topic, answering a question, summarizing a document, brainstorming options. The work is still mostly single-turn or lightly iterative. The model is not being asked to operate for long or manage a process.

Skills in this layer:

- task framing: defining what the model should do and what it should ignore

- role prompting: giving the model a useful stance without pretending that roleplay is a method on its own

- few-shot prompting: using examples to show the pattern you want

- source retrieval: pulling in the right documents, references, and assumptions

- citation asking: requesting traceable support instead of smooth unsupported claims

- answer review: checking whether the output actually answered the question

- hallucination spotting: catching confident fabrication and weak grounding

- output specification: asking for a usable structure instead of a blob of prose
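To make a few of these habits concrete, here is a minimal sketch of a prompt builder that combines task framing, an out-of-scope list, few-shot examples, a citation request, and an output specification in one message. The function name, its parameters, and the line layout are illustrative assumptions, not a prescribed format or any product's API.

```python
def build_prompt(task, ignore, examples, output_spec, require_citations=True):
    """Assemble a single-turn prompt from several small operator habits.

    task: what the model should do (task framing)
    ignore: topics the model should leave out of scope
    examples: list of (input, output) pairs showing the pattern (few-shot prompting)
    output_spec: the structure the answer must follow (output specification)
    """
    lines = [f"Task: {task}"]
    if ignore:
        lines.append("Out of scope: " + "; ".join(ignore))
    for i, (example_in, example_out) in enumerate(examples, start=1):
        lines.append(f"Example {i} input: {example_in}")
        lines.append(f"Example {i} output: {example_out}")
    if require_citations:
        # Citation asking: request traceable support instead of smooth claims.
        lines.append("Cite a source for every factual claim. If you cannot find one, say so.")
    lines.append(f"Output format: {output_spec}")
    return "\n".join(lines)


if __name__ == "__main__":
    # Hypothetical usage: the task and example text are made up for illustration.
    print(build_prompt(
        task="Summarize the attached quarterly report for a board audience",
        ignore=["marketing copy", "speculation about next quarter"],
        examples=[("Revenue rose 4% on higher renewals.", "- Revenue: +4%, driven by renewals")],
        output_spec="five bullet points, each under 20 words",
    ))
```

None of this makes the answer reliable on its own; it only makes the request clearer and the output easier to review.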

What people usually miss here is that good prompting is not one trick. It is a bundle of small operator habits. This layer buys speed. It does not yet buy reliability or leverage.

Simple Agents

This is where agentic work starts. The user stops asking only for text and starts giving the system bounded jobs: deep research, small scripts, UI prototypes, repo inspection, structured drafts. The shift is from asking for an answer to assigning a job.

Skills in this layer:

- task decomposition: breaking one large ask into bounded steps the agent can actually finish

- context packaging: supplying the files, screenshots, examples, and references the run depends on

- system prompt design: defining durable behavior and priorities before the run starts

- agent instruction writing: telling the agent what good looks like, how far it can go, and when it should stop

- tool selection: choosing the right tools and asking the agent to inspect before it acts

- file and repo grounding: anchoring the work in the actual documents, code, or assets involved

- step planning: making the agent sequence work instead of thrashing across tools

- artifact review: asking for a reviewable script, draft, prototype, or report rather than opaque output
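One way to picture the shift from asking for an answer to assigning a job is to write the job down as data. The sketch below is a hypothetical task spec, assuming a plain-text instruction format; the class name, field names, and tool names such as `read_file` are illustrative and not taken from any specific agent product.

```python
from dataclasses import dataclass, field


@dataclass
class AgentTask:
    """A bounded job for one agent run (all field names are illustrative)."""
    goal: str
    steps: list[str]                                          # task decomposition and step planning
    context_files: list[str] = field(default_factory=list)    # context packaging and grounding
    allowed_tools: list[str] = field(default_factory=list)    # tool selection
    done_when: str = ""                                       # what good looks like
    stop_if: list[str] = field(default_factory=list)          # when the agent should stop and ask
    deliverable: str = "a short written report"               # reviewable artifact

    def to_instructions(self) -> str:
        """Render the task as plain-text agent instructions."""
        parts = [f"Goal: {self.goal}"]
        if self.context_files:
            parts.append("Read these before acting: " + ", ".join(self.context_files))
        if self.allowed_tools:
            parts.append("You may use only: " + ", ".join(self.allowed_tools))
        parts.append("Work through these steps in order:")
        parts.extend(f"  {i}. {step}" for i, step in enumerate(self.steps, start=1))
        if self.done_when:
            parts.append(f"Done when: {self.done_when}")
        for condition in self.stop_if:
            parts.append(f"Stop and ask if: {condition}")
        parts.append(f"Deliver: {self.deliverable}")
        return "\n".join(parts)
```

For example, `AgentTask(goal="Audit README links", steps=["List links", "Check each"], context_files=["README.md"], allowed_tools=["read_file"], stop_if=["a link requires login"]).to_instructions()` produces instructions with an explicit boundary, an ordered plan, and a stop condition, which is most of what context engineering means at this layer.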

Vibe coding belongs here. It is prototype speed, not production discipline. Andrej Karpathy’s Vibe coding MenuGen captures both sides well: extreme speed early, then friction the moment real engineering concerns arrive.

What people usually miss here is context engineering. The agent is only as good as the job boundary, the instructions, and the materials you give it. This is where services like Claude Cowork Setup, Codex Setup, Agentic Slides, Proposal and RFP Assistant, Meeting Prep and Decision Pack, and Due Diligence Room Assistant fit.

Workflows and Guard Rails

Now the work gets wrapped in checks, timing, and standards. The operator is no longer chasing isolated wins. They are building a repeatable routine that can survive real usage.

Skills in this layer:

- workflow design: deciding where the agent starts, what it does, and what counts as done

- guard rail design: defining constraints, checklists, and forbidden actions before execution starts

- structured outputs: forcing results into forms that downstream steps can reliably inspect

- eval design: setting rubrics, failure conditions, and test cases instead of relying on taste

- retry and fallback logic: deciding what should retry, what should degrade gracefully, and what should stop

- approval gates: defining where humans review, approve, or reject

- state and memory design: deciding what the workflow should remember between runs and where it should store that state

- scheduling and alerting: deciding what should run on cadence, what should interrupt, and what should wait for review
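Several of these skills meet in a single workflow step. The sketch below, under stated assumptions, validates a structured output, retries malformed results, and routes low-confidence results to a human approval gate instead of passing them downstream. The `REQUIRED_KEYS` schema, the `confidence` field, and the `generate` callable (a stand-in for whatever model call you use) are hypothetical.

```python
import json

REQUIRED_KEYS = {"summary", "risks", "confidence"}  # hypothetical output schema


def validate(raw):
    """Return a parsed dict if raw is JSON with the required keys, else None."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict) or not REQUIRED_KEYS.issubset(data):
        return None
    confidence = data["confidence"]
    if not isinstance(confidence, (int, float)) or not 0 <= confidence <= 1:
        return None
    return data


def run_step(generate, max_attempts=3, approval_threshold=0.8):
    """Run one workflow step with validation, retries, and an approval gate.

    generate: callable returning the model's raw text (stubbed in tests)
    Returns (status, data), where status is "auto_approved", "needs_review", or "failed".
    """
    for _ in range(max_attempts):
        data = validate(generate())
        if data is None:
            continue  # malformed output: retry
        if data["confidence"] >= approval_threshold:
            return "auto_approved", data
        return "needs_review", data  # valid but uncertain: route to a human
    return "failed", None  # retries exhausted: stop instead of guessing
```

The design choice worth noticing is that none of the reliability here lives in the prompt: the schema, the retry budget, and the review threshold are all set outside the model call.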

This is where Closing the Loop and The End of Notifications become directly relevant. It is also where services like Agentic Content Management, Sales Follow-Up Operator, Pipeline Review Copilot, Board Pack Copilot, Exec Briefing Agent, Investor Update Engine, SEO Manager, QA Tester, Security Officer, and Webmaster fit.

What people usually miss here is that reliability comes from design outside the prompt. This layer buys reliability.

Delegation and Control

At this point the problem is no longer one agent and one task. The problem is decomposition, routing, approvals, and handoffs across roles, systems, and people.

Skills in this layer:

- delegation design: deciding what should be delegated, what should stay local, and what should never be autonomous

- role design: decomposing work into specialist agents and human responsibilities

- supervisor patterns: using a coordinating role to inspect, route, and contain work

- context handoffs: managing context transfer across people, tools, and channels without dropping critical state

- approval routing: deciding which steps can act, which need review, and which only advise

- permission design: matching tool and data access to the role instead of granting blanket power

- queue design: routing exceptions, triage, and ownership when work piles up

- escalation paths: deciding what happens when confidence drops, risk rises, or a workflow gets stuck
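Permission design, approval routing, and escalation paths can be sketched as one routing decision made before any action runs. This is a minimal illustration assuming a simple role-to-tool allowlist and a single confidence score per action; the role names, tool names, and threshold are hypothetical.

```python
from dataclasses import dataclass

# Hypothetical roles and tools: each role gets only the access it needs.
PERMISSIONS = {
    "researcher": {"web_search", "read_docs"},
    "drafter": {"read_docs", "write_draft"},
    "publisher": {"read_docs", "publish"},
}

# Actions that always need a human sign-off, whatever the role.
ALWAYS_REVIEW = {"publish"}


@dataclass
class Action:
    role: str
    tool: str
    confidence: float  # the agent's or a checker's confidence, from 0 to 1


def route(action: Action, min_confidence: float = 0.7) -> str:
    """Decide whether an action may run, needs review, must escalate, or is denied."""
    allowed = PERMISSIONS.get(action.role, set())
    if action.tool not in allowed:
        return "deny"  # permission design: no blanket power
    if action.confidence < min_confidence:
        return "escalate"  # escalation path: confidence dropped
    if action.tool in ALWAYS_REVIEW:
        return "review"  # approval routing: this step waits for a human
    return "act"
```

Checking permissions before confidence is deliberate: an action outside a role's access should be refused even when the agent is very sure of itself.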

This is where OpenClaw Setup, Engineering Team Agentic Setup, Agentic Website, Market Intelligence, and Company-Wide Agentic Workflow fit. It is also where Why Code-Centric AI Workflows Will Outperform Traditional Business Tools and How AI Can Pull Dev and Ops Teams Out of DevOps Hell fit cleanly into the argument.

Agentic engineering belongs here. The work is harness design, tool permissions, review surfaces, interface contracts, queues, and failure handling. OpenAI’s Harness engineering: leveraging Codex in an agent-first world and Simon Willison’s How coding agents work are strong references on that shift.

Organizational Transformation

This is the point where AI stops being a productivity layer and starts changing how the work is organized. The question is no longer whether one workflow performs well. The question is whether a function can be redesigned around agentic systems, with clear ownership, controls, budgets, training, and failure handling built into normal operations.

Skills in this layer:

- function redesign: reshaping one business function so the work can move through a governed agentic structure

- workflow ownership: deciding who owns results, failures, budgets, and improvements

- governance: defining controls, reporting, auditability, and exception handling around live autonomous work

- operator training: teaching people how to run, review, and improve these systems

- change management: changing incentives, habits, and interfaces instead of layering AI onto old ways of working

- cost and risk controls: treating spend, model risk, security, and compliance as operating constraints

- cross-functional integration: aligning handoffs, incentives, and system boundaries across teams instead of within one workflow

- capability rollout: sequencing change and extending the model without losing control
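Treating spend as an operating constraint can be as simple as a budget check that runs before each job. The sketch below assumes a per-workflow monthly cap and a cost estimate you supply; the class and method names are hypothetical, and real costs depend on your provider's pricing.

```python
class SpendBudget:
    """Track model spend for one workflow and refuse runs that would exceed a cap.

    Limits and costs are hypothetical inputs; this sketch does not fetch real prices.
    """

    def __init__(self, monthly_limit_usd: float):
        self.limit = monthly_limit_usd
        self.spent = 0.0

    def can_afford(self, estimated_cost_usd: float) -> bool:
        """Return True if the estimated run fits inside the remaining budget."""
        return self.spent + estimated_cost_usd <= self.limit

    def record(self, actual_cost_usd: float) -> None:
        """Add the actual cost of a completed run."""
        self.spent += actual_cost_usd

    def remaining(self) -> float:
        """Budget left this period, never below zero."""
        return max(self.limit - self.spent, 0.0)
```

A refused run is then an exception for the workflow owner to handle, not a silent overspend discovered at the end of the month.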

This is where the services stop being about one setup and start being about operating redesign. Small Autonomous Organization and Complex Autonomous Organization turn one function into a governed operating unit. Company-Wide Agentic Workflow changes how a team works together in practice. Company-Wide Agentic Deep Dive is the broader transformation move across multiple functions. Roadmap Agentic Review and Your Agentic Use Case help decide where that redesign should start.

Skills Hidden Inside the 28 Services

FORMATION XYZ offers around 30 services to help companies get started with AI. To make that catalogue easier to navigate, we label services as starter, intermediate, and advanced. Those labels are not product tiers. They are shorthand for the operator skills a team needs in order to use the service well and keep getting value from it after the initial setup.

That is the point of the skill tree. A service is not just something we deliver and walk away from. It is also a transfer mechanism for skills. If a team buys a workflow but never learns how to frame tasks, package context, review output, design , or manage handoffs, then the workflow stays dependent on outside help and eventually degrades.

What we do is as much about coaching and teaching as it is about helping you automate work. Operating a company is a team job. The long-term win is not one clever workflow. It is getting the people across that company to level up their judgment, operating habits, and practical AI skills so the systems keep improving after we leave.

This is also why AI work cannot be treated like a side errand for the youngest person in the room or a novelty delegated to an intern. The useful gains come when the people who own the work learn how to operate the systems around that work. Sales leaders need to understand review loops and handoff quality. Engineering leaders need to understand context, permissions, and harness design. Operators need to understand when to trust a system, when to inspect it, and when to stop it.

So the service catalogue is best read as a set of entry points into the skill tree. Some services help a team build basic prompting and bounded agent skills. Others help teams move into workflows, approvals, evals, and recurring operations. The most advanced services are not really about AI tooling at all. They are about helping a company redesign how work is owned, reviewed, and improved.

What This Means In Practice

The point of this tree is simple: AI value grows as operator skill grows. The real gains come when teams move beyond isolated wins and start building the habits, workflows, and judgment that make good results repeatable.

If your team is still in one-shot prompting mode, that is a perfectly valid place to start. If you can already run bounded tasks with simple agents, the next step is usually to add workflows with guard rails, evals, approvals, and recurring review. And once those patterns start working, the opportunity shifts again: from isolated use cases toward redesigning ownership, controls, and team routines around agentic systems that can be trusted.

That is where FORMATION XYZ fits. We help teams automate useful work, but we also help them build the skills needed to operate that work well. The goal is not to leave you with a clever setup that only works while we are in the room. The goal is to level up your people so the systems become part of how the company works.

Where you start depends on where your team is now and what is most urgent to improve. The hype around OpenClaw is huge right now, and for good reason. People are doing genuinely transformative things with it. But it also comes with real risks and real failure modes. OpenClaw is not just a tool install. It pushes teams straight into delegation, control, and organizational redesign, which means it exposes a lot of the skill tree very quickly.

That is also why the value of something like OpenClaw is not just that you get the tool running. The value is that it gives your team a serious way to get its hands dirty with AI, build operator judgment, and work through the layers of skill that make larger transformations possible. For some teams that is the right place to start. For others, it makes more sense to begin with Claude Cowork for document and research workflows or Codex for repo-centric and technical workflows, then move upward from there. And not every team needs to start with a starter package. You may already have your preferred tools running and be looking for the next step in your agentic journey.

Browse our services. See which ones feel most relevant. Then reach out. We will help scope what matters most, figure out the right starting point, and get your team moving.

Learn More

This article sits inside a broader argument on this site. In Closing the Loop, we make the case that useful AI systems are not just generators. They are loops with checks, corrections, and control points. In The End of Notifications, we push that further and argue that good systems should reduce interruption, not create more of it.

Why Code-Centric AI Workflows Will Outperform Traditional Business Tools explains why structured files, repos, and reviewable environments matter so much when you want agents to do real work. How AI Can Pull Dev and Ops Teams Out of DevOps Hell shows what that looks like in operational practice, where the real gain comes from turning fixes, checks, and into reusable systems.

If you want the speed side of this story, Hyper Agile and What if time to market was measured in hours or days instead of months or years? show what happens when teams can compress the cycle from idea to launch. If you want the cautionary side, The NRC affair shows why newsrooms need skills, not just AI tools makes the case that weak operator judgment does not disappear just because a model is involved.